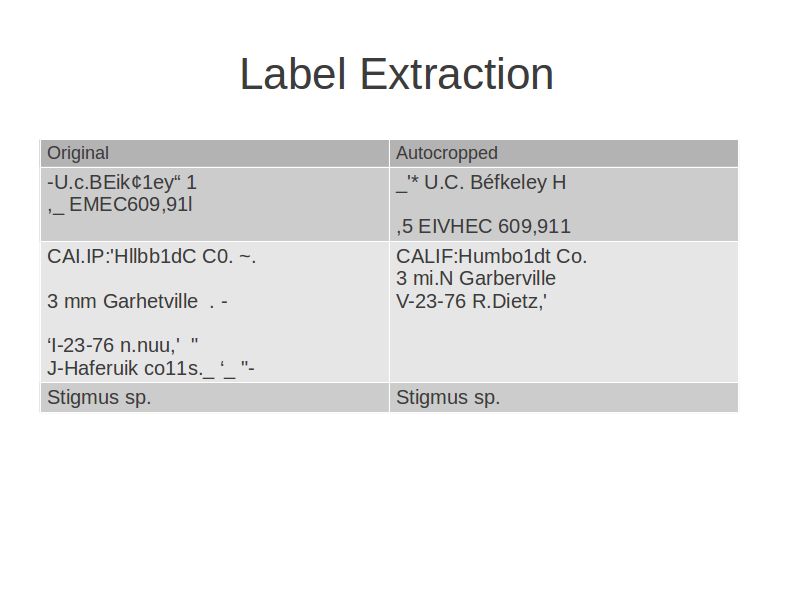

I assume it would help greatly if I could crop the documents out of the images and ignore the backgrounds that way, but I'm not sure how to recognise a document against a background. Could contours help?

My other concern is how to make my code handle all of these generically: a green document with white text (Google ID), a white document with dark text (IBM ID), and a light yellow document with gray and yellow text (Apple). I could write three different algorithms, but I would still need to decide which one to use on each image.

The documents are also skewed, and it would help if I could deskew them in pre-processing. Any general pointers or code snippets for specific parts of pre-processing would help.

Here is the code I have working only with IBM cards for now, in fragments:

- `import datetime`, followed by a constructor that begins `def __init__(self, name: str = None, date_of_birth: datetime.date = None, job: str = None, ssn: str = None,`
- an image loader that ends with `return cv2.cvtColor(cv2.imread(path), cv2.COLOR_BGR2RGB)`
- a grayscale conversion that ends with `return cv2.cvtColor(image, cv2.COLOR_RGB2GRAY)`
- binarisation with `bin_image = cv2.adaptiveThreshold(image_gs, 255, cv2.ADAPTIVE_THRESH_MEAN_C, cv2.THRESH_BINARY, 15, 5)`, with the global threshold commented out: `# ret, bin_image = cv2.threshold(image_gs, 200, 255, cv2.THRESH_BINARY)`
- the entry point `def extract_info_from_image(image_path: str) -> Person:`
- two comments on string checks: `# myString is not None AND myString is not empty or blank` and `# myString is None OR myString is empty or blank`
- the OCR call, which begins `line_and_word_boxes = tool.image_to_string(` and passes a builder with `tesseract_layout=4`, followed by `for i, line in enumerate(line_and_word_boxes):` and `print(box.content, box.position, box.confidence)`
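One possible way to avoid three separate algorithms for the white-on-green and dark-on-white cards is to normalise polarity first, so that the OCR step always sees dark text on a light background. The sketch below is only an illustration under stated assumptions: the image is already cropped to the card and converted to 8-bit grayscale, the background covers more than half of the pixels, and a fixed threshold of 128 separates dark from light. The names `is_dark_background` and `normalise_polarity` are hypothetical. Plain Python lists are used to keep the logic visible; on an OpenCV array the inversion itself would be `cv2.bitwise_not(image_gs)`.

```python
from typing import List

# Rows of 8-bit grayscale values: 0 is black, 255 is white.
Gray = List[List[int]]


def is_dark_background(image: Gray, threshold: int = 128) -> bool:
    """Guess polarity: True when more than half of the pixels are darker than threshold."""
    total = sum(len(row) for row in image)
    if total == 0:
        raise ValueError("empty image")
    dark = sum(1 for row in image for value in row if value < threshold)
    return dark * 2 > total


def normalise_polarity(image: Gray, threshold: int = 128) -> Gray:
    """Return a copy with dark text on a light background (assumption: background dominates)."""
    if is_dark_background(image, threshold):
        # Light text on a dark card: invert every pixel.
        return [[255 - value for value in row] for row in image]
    # Already dark text on a light card: return an unchanged copy.
    return [row[:] for row in image]


# A tiny stand-in for a dark card with light text in the middle.
white_on_green = [[60, 60, 60, 60], [60, 250, 250, 60], [60, 60, 60, 60]]
print(normalise_polarity(white_on_green))
# [[195, 195, 195, 195], [195, 5, 5, 195], [195, 195, 195, 195]]
```

This check does nothing for a low-contrast card such as the light yellow one with gray and yellow text; that case would still need contrast enhancement or a locally adaptive threshold.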

I have three different types of ID cards on different multicoloured backgrounds. For each card I need to recognise the name, company, job, date of birth and social security number of the owner. I'm limited to using OpenCV v3, Tesseract v4, Keras, Scikit-Learn and Python 3.6. Tesseract 4.x expects dark text on a light background, so I tried working with a gray image, a binary image, even an inverted image, but I can't find a solution that works for all the types of document I have.

Then I tried to install Tesseract using the command `pip install tesseract-ocr`. The result was the error "No OCR tool found". When I searched for this error, I found that PyOCR looks for the OCR tools (Tesseract, Cuneiform, etc.) installed on your system and just tells you what it has found.

In the case of Homebrew, installation is just `brew install tesseract`. Download the training data from the link above and store it in the directory below.

`usr/local/Cellar/tesseract//share/tessdata`

From version 4.0.0, you can also choose "tessdata_best", which emphasizes accuracy, or "tessdata_fast", which emphasizes speed. In this article, we will use the usual training data, "tessdata". For other environments, please refer to the following. This completes the environment setup.

Try running it. The script imports `pyocr`, `pyocr.builders`, `cv2`, `Image` from `PIL`, and `sys`. It calls `tools = pyocr.get_available_tools()` and, if `len(tools) == 0`, prints "No OCR tool found" and exits. It then opens the image with `Image.open("")` and runs recognition with `lang="jpn"` and `builder=pyocr.builders.WordBoxBuilder(tesseract_layout=6)`; the output image is handled with OpenCV. It's that simple, isn't it?

It seems that patterns and character strings are misrecognized as a single character. Thus, Tesseract OCR (with this training data) is vulnerable to tilted and distorted characters. Since patterns and the like are often misrecognized, some restrictions on the input appear to be necessary in practical use.
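The "No OCR tool found" message concerns OCR engine executables on the system rather than Python packages, so a Python package installed with pip does not necessarily make an engine visible. A minimal sketch for checking this before involving PyOCR, assuming the engines are looked up on `PATH` under the executable names `tesseract` and `cuneiform` (the helpers `find_ocr_binaries` and `describe` are hypothetical and are not part of PyOCR):

```python
import shutil
from typing import Dict, Iterable, Optional


def find_ocr_binaries(
    names: Iterable[str] = ("tesseract", "cuneiform"),
) -> Dict[str, Optional[str]]:
    """Map each executable name to its full path on PATH, or None if it is not found."""
    return {name: shutil.which(name) for name in names}


def describe(found: Dict[str, Optional[str]]) -> str:
    """Summarise the lookup in one line, reusing the article's message when nothing is found."""
    available = [f"{name} ({path})" for name, path in found.items() if path]
    if not available:
        return "No OCR tool found"
    return "Found: " + ", ".join(available)


# The output depends on what is installed on the machine running this.
print(describe(find_ocr_binaries()))
```

If this reports that nothing was found, installing the engine itself (for example with `brew install tesseract` on macOS) is the likely fix, rather than another pip package.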

This time, I tried OCR (optical character recognition) using "Tesseract OCR" and "PyOCR".

"Tesseract OCR" is an open-source OCR engine originally developed at HP and later sponsored by Google. It supports Unicode (UTF-8) and can recognize more than 100 languages out of the box.

"PyOCR" is an OCR tool wrapper for Python that lets you use various OCR tools from Python programs. It currently supports three OCR tools.
